What is behind arguably the most important feature of a Q&A site - Issue #3

And how it leads to false positives and negatives

With over 138,000 active subreddits out of 1.2 million as of July 2018, it is almost impossible to keep an eye on all of Reddit’s content. That is why the site relies on algorithms that personalize content consumption, centered on the recommendations of others.

However, the question that arises is how vulnerable those algorithms are to everything happening around them: spam, and malicious or accidental up- and downvotes, all of which may affect the integrity of the algorithms themselves. Various experiments have been conducted to study this potential.

In this article, we will explore:

The origin of the upvote/downvote mechanism

Experiments conducted to study its effects

The birth of a feature that universally powers every Q&A site in the world

Alexis Ohanian, together with his co-founder Steve Huffman, founded the “front page of the internet” 14 years ago with the goal of giving users the superpower to control what appeared on the site. However, it was not easy to come up with what now appears to be a near-universal feature of Q&A sites: the user-driven, algorithm-enhanced engine of “upvotes” and “downvotes”.

Before the feature was finalized, they had already experimented with various approaches to find what worked best. Among them were BuzzFeed-like individual reactions attached to links posted on Reddit. The most prominent was “WTF” (no, it is not what you think, lol), a button that a user could click while reading a post. Clicking it brought up a prompt asking the user why she felt that way and urging her to comment on the post.

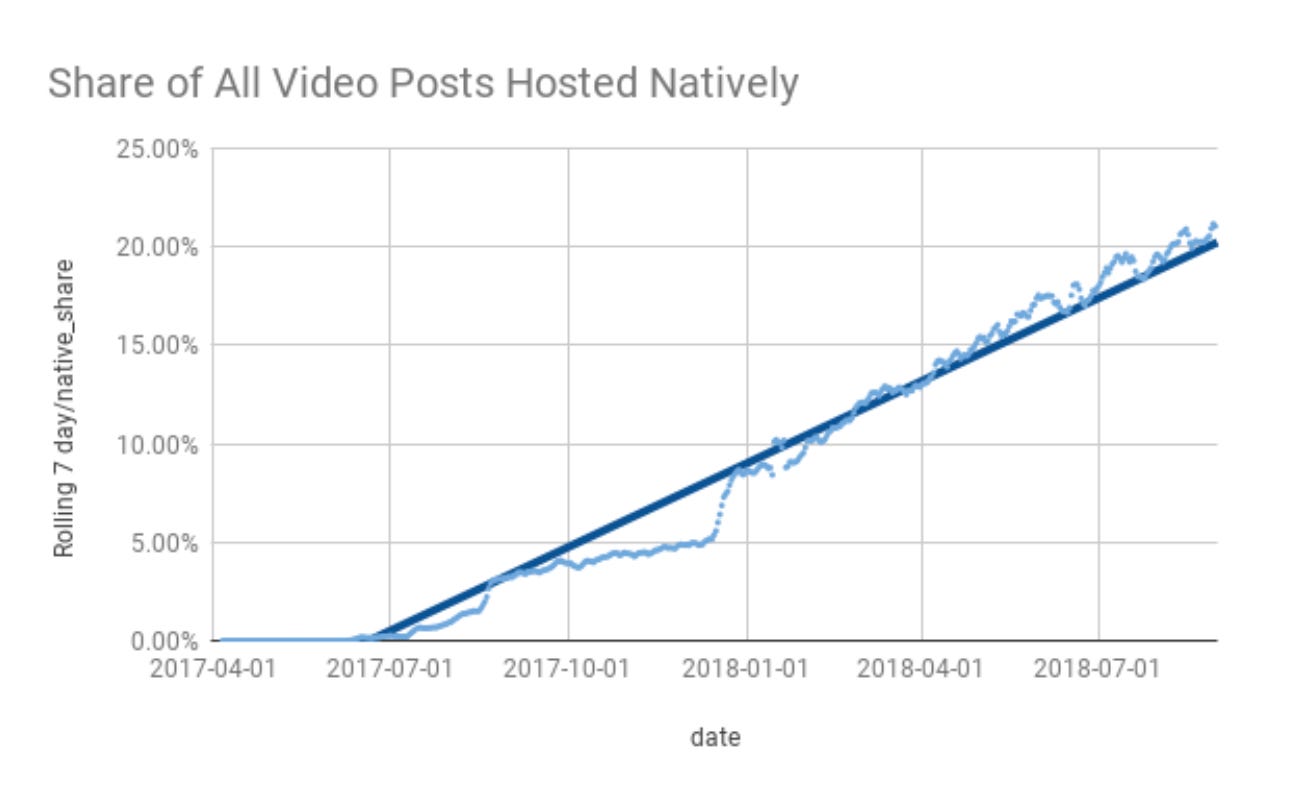

With over 400k hours of native video published daily on Reddit, and 13 million hours published every month, the company has seen growth of 38% since the start of 2018. However, in the social network universe, the amount of uploaded content alone is not enough. It needs to be accompanied by social interaction between users (hence the name itself). This is why upvotes and downvotes exist: to accelerate social interaction among Redditors.

Even though the idea took off well, it did not come without controversy. Startups like Uber have faced issues over independent contractors, while Silicon Valley counterparts like Facebook and Google have had issues with user privacy. In Reddit’s case, the challenge was coming up with an algorithm that better handles offhand, accidental, or even malicious up- and down-voting. But why is it even an issue? Here is why.

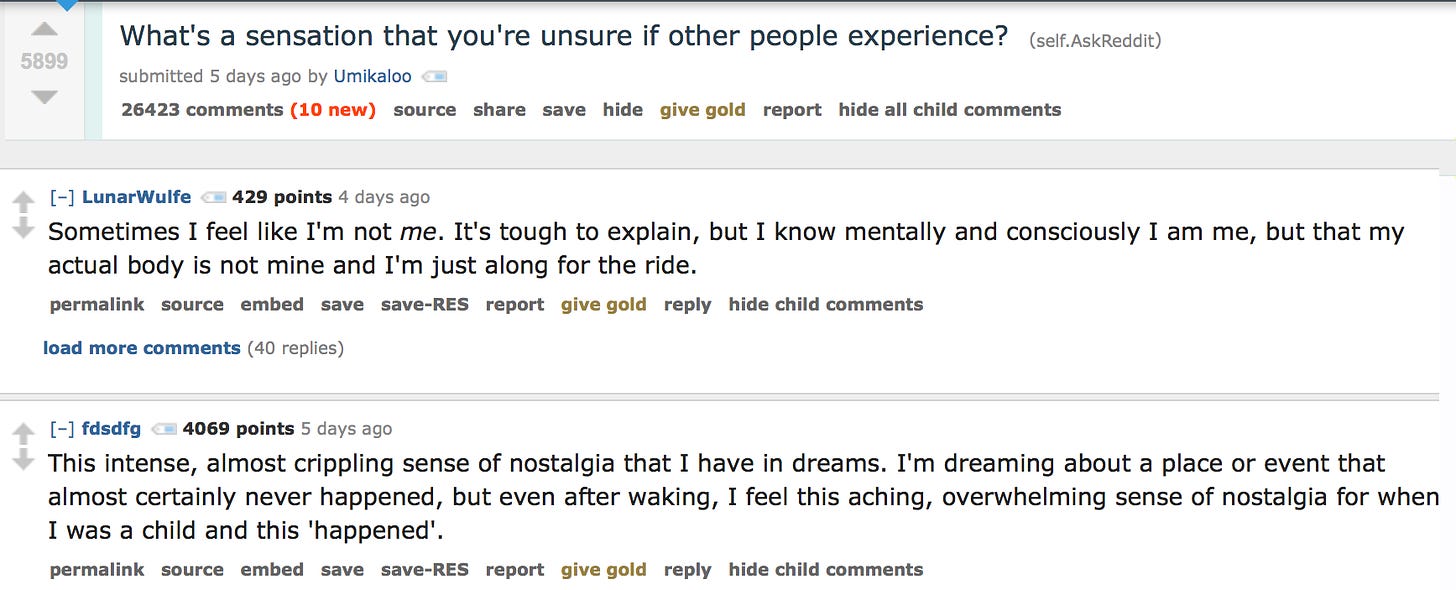

Human beings have always been strongly driven by the views of others, but the growing number of social media sites that have come to control our lives may be turning this “social evidence” into a problem. To test this hypothesis, researchers have conducted studies to understand the impact of each upvote, downvote, or even the absence of a vote, on people’s everyday decisions.

Experiment #1 - Social Influence Bias: A Randomized Experiment

In a randomized experiment run in collaboration with a social news aggregation website, on which readers could vote on and reply to posted comments, researchers examined the effect of collective information.

The study was conducted over a 5-month period and involved monitoring 100,000+ comments made by users. These comments were viewed over 10 million times and rated 300,000+ times. As a control measure, the researchers set up the experiment so that each comment was randomly displayed with a positive vote, a negative vote, or no vote at all.

The results showed that previous ratings created a significant bias in individual rating behavior. In fact, a comment was 32% more likely than a control-group comment to receive another upvote, even when only a single upvote had been given to it before publication. Comments that received an initial upvote also saw their overall rating increase by 25% compared with the control group.
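To make the "32% more likely" figure concrete, here is a minimal sketch of how a relative increase over a control group is computed. The baseline probability below is a made-up number used only for illustration, not a figure from the study, and `relative_increase` is a hypothetical helper name.

```python
def relative_increase(treated: float, control: float) -> float:
    """Return the relative change of `treated` over `control` as a fraction."""
    if control == 0:
        raise ValueError("control value must be non-zero")
    return (treated - control) / control


# Hypothetical baseline: assume 5% of control comments receive an upvote
# from the next viewer. This number is NOT taken from the study.
control_prob = 0.05

# "32% more likely" is a relative increase, so the treated probability is
# the baseline scaled by 1.32, not the baseline plus 32 percentage points.
treated_prob = control_prob * 1.32

print(f"treated probability: {treated_prob:.3f}")
print(f"relative increase: {relative_increase(treated_prob, control_prob):.0%}")
```

Under this assumed baseline, the treated probability is still small in absolute terms, which is worth keeping in mind when reading percentage effects like these.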

Experiment #2 - Identifying and Understanding User Reactions to Deceptive and Trusted Social News Sources

Another study was conducted by Maria Glenski and Tim Weninger, two researchers from the University of Notre Dame, and Svitlana Volkova from Pacific Northwest National Laboratory. The goal of this experiment was to better understand how users respond to trusted and deceptive news sources across two popular, and very distinct, social media platforms.

In order to achieve that, they:

came up with a model to classify users’ reactions into one of nine types.

measured the speed and type of reactions to trusted and deceptive news sources across about 6.2 million Reddit comments and 10.8 million Twitter posts.

The results of the analysis showed significant differences in the distribution of reaction types, as well as in the speed of reactions, for trusted versus deceptive news on both Twitter and Reddit. Twitter users, for example, questioned disinformation sources less often and more slowly than they did trusted news sources. In addition, Twitter users expressed appreciation for disinformation sources more often and more quickly than for trusted sources. Reddit showed similar results, although the differences were far less pronounced.

Experiment #3 - Rating Effects on Social News Posts and Comments

Another interesting experiment was conducted, again by Maria Glenski and Tim Weninger. This time around, they investigated the effects that editorial ratings had on online human behavior.

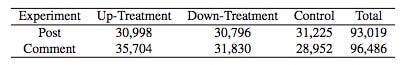

The experiment had two parts: a post-based experiment and a comment-based experiment.

Post-based Experiment

A computer program ran every 2 minutes during the study period between 1st September 2013 and 31st January 2014. It collected Reddit post data through an automated 2-step process and then randomly chose to upvote a post, downvote it, or, as a control measure, leave it untouched.

Before casting a vote, Maria and Tim waited up to an hour after a post first appeared to see whether timing mattered. Overall, the researchers looked at 93,019 posts, then followed up four days later to record the final scores of those posts.
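The assignment procedure described above can be sketched roughly as follows. This is only an illustration: it assumes the three treatments are equally likely and that the waiting time is drawn uniformly between 0 and 60 minutes, neither of which is confirmed here, and the names `Assignment` and `assign_treatment` are hypothetical. No real Reddit API is called.

```python
import random
from dataclasses import dataclass

TREATMENTS = ("upvote", "downvote", "control")
MAX_DELAY_MINUTES = 60  # votes were cast up to an hour after posting


@dataclass
class Assignment:
    post_id: str
    treatment: str
    delay_minutes: float


def assign_treatment(post_id: str, rng: random.Random) -> Assignment:
    """Randomly pick a treatment and a waiting time for one post."""
    # Assumption: each treatment is chosen with equal probability.
    treatment = rng.choice(TREATMENTS)
    # Control posts are left untouched, so no vote delay applies to them.
    delay = 0.0 if treatment == "control" else rng.uniform(0, MAX_DELAY_MINUTES)
    return Assignment(post_id, treatment, delay)


rng = random.Random(42)  # fixed seed so the sketch is reproducible
assignments = [assign_treatment(f"post_{i}", rng) for i in range(6)]
for a in assignments:
    print(a)
```

Because the treatment is chosen at random and independently of the post's content, any later difference in final scores between the groups can be attributed to the initial vote, not to the quality of the posts.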

Comment-based Experiment

The comment-based experiment followed a similar process to the post-based experiment, but the two were conducted separately. In the end, this data collection yielded 96,486 sampled comments.

The summary of the sample count for the data collected from the period of the study can be seen below:

Overall, the results showed that randomly upvoted posts scored slightly over 32 points, meaning 32 more upvotes than downvotes, which was 11% higher than the control group. Posts that were randomly downvoted scored 5.2% lower than the controls.

Effect on political activity

This is an exciting time for the people of the United States as they approach another historic moment for the country: the 2020 Election Day on the 3rd of November. For both presidential candidates, this could be the most important moment of their lives as they gather the support of the people for “upholding the nation’s agenda”. Given that social media and elections can no longer be separated, it is worth seeing how social media affected the previous, controversy-filled campaign: the 2016 US Presidential Election.

According to Rishab Nithyanand, a data scientist and active Reddit user, visitors to political forums on Reddit saw a rise in links to controversial news sources in 2016. These are the same problems experienced by other companies under scrutiny, like Google, Facebook and Twitter. But that was not the end.

What Nithyanand seemed to find was that Reddit was an important part of the chain in spreading "fake news" across social sites. Although it might seem that Reddit forums were blinkered, Brian Solis, a social media analyst at the research firm Altimeter, said that the site punches above its weight in impact on the Internet.

For regular Redditors, these findings indicated that after December 2015, any time they logged into their favorite political forums, they were more likely to see posts from Redditors who also visited forums like r/nazi, r/killingwomen or r/antifatart, or even links to contentious news sources like beforeitsnews.com.

(Full study can be found here)

Takeaway

The proliferation of internet-driven knowledge has helped us gradually sidestep the old ways of obtaining the knowledge we need, which now seem tedious and time-consuming. Today we can get what we need at the click of a button. However, this also means that we are presented with enticing ways to bypass the conventional gatekeepers of accurate knowledge. Frankly, how many of us just focus on what the Internet says about a government decision instead of looking at the actual text?

Relying more and more on social media and crowd-based opinion generators not only makes such sidestepping feasible and more likely to occur, it also increases the reach of false views, whether they are spread deliberately or not.

If you’ve enjoyed reading this article, you can always reach me at aizuddinadli97@gmail.com. Any feedback will be much appreciated. If you liked what you read, please consider sharing or subscribing. See you in the next edition.

Credit: 1st image source - here; 2nd image source - here; 3rd image source - here; 4th image source - here; 5th image source - here; 6th image source - here
